Kubernetes is all about containerization. Is that enough to make rightsizing and cost savings happen?

Running more workloads on the same server instance might seem more cost-effective.

But tracking which projects or teams generate Kubernetes costs is hard. So is knowing whether you’re getting any savings from your cluster.

There’s one tactic that helps: autoscaling. The more tightly you configure your Kubernetes scaling mechanisms, the less waste you generate and the lower the cost of running your application.

Read on to learn how to use Kubernetes autoscaling mechanisms to drive your cloud costs down.

And if autoscaling is no mystery to you, take a look at these tips: 8 best practices to reduce your AWS bill for Kubernetes

How autoscaling works in Kubernetes: Horizontal vs. Vertical

Horizontal autoscaling

Horizontal autoscaling allows you to create rules for starting or stopping instances assigned to a resource when a monitored metric breaches its upper or lower threshold.

Limitations:

- It might require you to architect the application with scale-out in mind so that distributing workloads across multiple servers is possible.

- It might not always keep up with unexpected demand peaks, as instances take a few minutes to load.

Vertical autoscaling

Vertical autoscaling is based on rules that affect the amount of CPU or RAM allocated to an existing instance.

Limitations:

- You’ll be limited by upper CPU and memory boundaries for a single instance.

- There are also connectivity ceilings for every underlying physical host due to network-related limitations.

- Some of your resources may be idle at times, and you’ll keep paying for them.

Kubernetes autoscaling methods in detail

1. Horizontal Pod Autoscaler (HPA)

In many applications, usage may change over time. As the demands of your application vary, you may want to add or remove pod replicas. This is where Horizontal Pod Autoscaler (HPA) comes in to scale these workloads for you automatically.

What you need to run Horizontal Pod Autoscaler

By default, HPA runs as part of the standard kube-controller-manager daemon. It manages only the pods created by a scalable workload controller, such as a Deployment, ReplicaSet, or StatefulSet.

To work as it should, the HPA controller requires a source of metrics. For example, when scaling based on CPU usage, it uses metrics-server.

If you’d like to use custom or external metrics for HPA scaling, you need to deploy a service implementing the custom.metrics.k8s.io or external.metrics.k8s.io API. This provides an interface with a monitoring service or metrics source. Custom metrics can include network traffic or any other value that relates to the pod’s application.

And if your workloads use the standard CPU metric, make sure to configure CPU resource requests for containers in the pod spec, because HPA calculates utilization as a percentage of the requested amount.

When to use it?

Horizontal Pod Autoscaler is a great tool for scaling stateless applications. But you can also use it to support scaling stateful sets.

To achieve cost savings for workloads that see regular changes in demand, use HPA in combination with cluster autoscaling. This will help you reduce the number of active nodes when the number of pods decreases.

How does Horizontal Pod Autoscaler work?

If you configure HPA controller for your workload, it will monitor that workload’s pods to understand whether the number of pod replicas needs to change.

HPA uses the mean of a per-pod metric value to determine this. It calculates whether removing or adding replicas would bring the current value closer to the target value.

Example scenario:

Imagine that your deployment has a target CPU utilization of 50%. You currently have five pods running there, and the mean CPU utilization is 75%.

In this scenario, HPA controller will add three replicas so that the pod average is brought closer to the target of 50%.
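The arithmetic behind this scenario can be sketched in a few lines. The snippet below follows the replica formula described in the Kubernetes HPA documentation (desired = ceil(current replicas × current value / target value)), with the default tolerance of 0.1. The function name and parameters are illustrative, and the real controller also applies stabilization windows, readiness checks, and min/max replica bounds that this sketch leaves out.

```python
import math


def desired_replicas(current_replicas: int,
                     current_value: float,
                     target_value: float,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA replica calculation (illustrative only)."""
    ratio = current_value / target_value
    # Skip scaling when the ratio is close enough to 1.0.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    # Round up so that the per-pod average ends at or below the target.
    return math.ceil(current_replicas * ratio)


# The scenario above: five pods, 75% mean CPU utilization, 50% target.
print(desired_replicas(5, 75, 50))
```

With five pods at 75% against a 50% target, the ratio is 1.5, so the result is eight replicas, that is, three more than before.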

Tips for using Horizontal Pod Autoscaler

1. Install metrics-server

To make scaling decisions, HPA needs access to per-pod resource metrics. They’re retrieved from the metrics.k8s.io API provided by the metrics-server. That’s why you need to launch metrics-server in your Kubernetes cluster as an add-on.

2. Configure resource requests for all pods

Another key source of information for HPA’s scaling decisions is the observed CPU utilization values of pods.

But how are utilization values calculated? They are a percentage of the resource requests from individual pods.

If you miss resource request values for some containers, the calculations might become entirely inaccurate. You risk suboptimal operation and poor scaling decisions.

That’s why it’s worth configuring resource request values for all the containers of every pod that’s part of the Kubernetes controller scaled by HPA.
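To see why a missing request matters, here is a simplified sketch of a utilization calculation. It assumes utilization is total usage divided by total requests across the scaled pods, and it returns None when any request is missing to signal that no trustworthy value can be computed. The data layout (usage_m and request_m in millicores) is made up for this example and is not an actual Kubernetes API.

```python
from typing import Optional


def cpu_utilization_percent(pods: list[dict]) -> Optional[float]:
    """Return CPU utilization as a percentage of requests, or None.

    Each pod is a dict with hypothetical keys:
      "usage_m"   - observed CPU usage in millicores
      "request_m" - CPU request in millicores, or None if unset
    """
    # Without a request there is nothing to express usage as a percentage of.
    if any(pod["request_m"] is None for pod in pods):
        return None
    total_usage = sum(pod["usage_m"] for pod in pods)
    total_request = sum(pod["request_m"] for pod in pods)
    return 100.0 * total_usage / total_request


complete = [{"usage_m": 150, "request_m": 200},
            {"usage_m": 150, "request_m": 200}]
incomplete = [{"usage_m": 150, "request_m": 200},
              {"usage_m": 150, "request_m": None}]

print(cpu_utilization_percent(complete))
print(cpu_utilization_percent(incomplete))
```

The first call yields a usable percentage, while the second has no meaningful answer, which is the situation that setting requests on every container avoids.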

3. Configure the custom/external metrics

Custom or external metrics can also serve as a source for HPA’s scaling decisions. HPA supports two types of custom metrics:

- Pod metrics – averaged across all the pods, supporting only target type of AverageValue,

- Object metrics – describing any other object in the same namespace and supporting target types of Value and AverageValue.

When configuring custom metrics, remember to use the correct target type for pod and object metrics.

What about external metrics?

These metrics allow the HPA to autoscale applications based on metrics that are provided by third-party monitoring systems. External metrics support the target types of Value and AverageValue.

When deciding between custom and external metrics, it’s best to go with custom because securing an external metrics API is more difficult.
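The pairing of metric sources and target types described above can be checked mechanically. The sketch below represents metric entries in the shape used by the autoscaling/v2 HorizontalPodAutoscaler spec and verifies the target type for each source. The check_metric helper and the packets-per-second metric name are illustrative assumptions, not part of Kubernetes itself.

```python
# Target types that each metric source supports, as listed above.
ALLOWED_TARGET_TYPES = {
    "Pods": {"AverageValue"},
    "Object": {"Value", "AverageValue"},
    "External": {"Value", "AverageValue"},
}


def check_metric(metric: dict) -> bool:
    """Return True if the metric entry uses a supported target type."""
    source = metric["type"]                      # "Pods", "Object", "External"
    body = metric[source.lower()]                # nested under "pods", "object", ...
    return body["target"]["type"] in ALLOWED_TARGET_TYPES[source]


# A pod metric must use AverageValue (hypothetical metric name).
pods_metric = {
    "type": "Pods",
    "pods": {
        "metric": {"name": "packets-per-second"},
        "target": {"type": "AverageValue", "averageValue": "1k"},
    },
}

# The same metric with a Value target is not valid for the Pods source.
bad_pods_metric = {
    "type": "Pods",
    "pods": {
        "metric": {"name": "packets-per-second"},
        "target": {"type": "Value", "value": "1k"},
    },
}

print(check_metric(pods_metric), check_metric(bad_pods_metric))
```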

2. Vertical Pod Autoscaler (VPA)

Vertical Pod Autoscaler (VPA) is a Kubernetes autoscaling method that increases and decreases the CPU and memory resource requests of pod containers to match the allocated cluster resource to the actual usage better.

VPA replaces only the pods managed by a replication controller. It also requires the Kubernetes metrics-server to work, because its recommendations are based on observed resource usage.

It’s a good practice to use VPA and HPA at the same time if the HPA configuration doesn’t use CPU or memory to identify scaling targets.

When to use Vertical Pod Autoscaler?

Some workloads might experience temporary high utilization. Increasing their resource requests permanently would waste CPU or memory resources and limit the nodes that can run them.

Spreading a workload across multiple instances of an application might be difficult. This is where Vertical Pod Autoscaler can help.

How does Vertical Pod Autoscaler work?

A VPA deployment includes three components:

- Recommender – it monitors resource utilization and computes target values,

- Updater – it checks if pods require a new resource limits update,

- Admission Controller – it uses a mutating admission webhook to overwrite the resource requests of pods when they’re created.

Kubernetes doesn’t allow dynamic changes in the resource limits of a running pod. VPA can’t update the existing pods with new limits.

Instead, it terminates pods using outdated limits. When the pod’s controller requests a replacement, the VPA controller injects the updated resource request and limits values to the new pod’s specification.

Tips for using Vertical Pod Autoscaler

1. Use it with the correct Kubernetes version

Version 0.4 and later of Vertical Pod Autoscaler needs custom resource definition capabilities, so it can’t be used with Kubernetes versions older than 1.11. If you’re using an earlier Kubernetes version, use version 0.3 of VPA.

2. Run VPA with updateMode: “Off” at first

To be able to configure VPA effectively and make full use of it, you need to understand the resource usage of the pods that you want to autoscale. Configuring VPA with updateMode: “Off” will provide you with the recommended CPU and memory requests. Once you have the recommendations, you have a great starting point that you can adjust in the future.
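As an illustration, a recommendation-only VPA object could look like the manifest generated below. This is a sketch that assumes a recent VPA release serving the autoscaling.k8s.io/v1 API and a Deployment as the target. The names my-app and my-app-vpa, and the helper function itself, are placeholders.

```python
import json


def vpa_recommendation_only(name: str, deployment: str) -> dict:
    """Build a VerticalPodAutoscaler manifest that only produces recommendations."""
    return {
        "apiVersion": "autoscaling.k8s.io/v1",   # assumes a recent VPA release
        "kind": "VerticalPodAutoscaler",
        "metadata": {"name": name},
        "spec": {
            "targetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            # "Off" means VPA computes recommendations but never evicts pods.
            "updatePolicy": {"updateMode": "Off"},
        },
    }


manifest = vpa_recommendation_only("my-app-vpa", "my-app")
print(json.dumps(manifest, indent=2))
```

Since kubectl accepts JSON as well as YAML, the printed output can be saved to a file and applied with kubectl apply -f. The recommendations then show up in the status of the VPA object.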

3. Understand your workload’s seasonality

If your workloads have regular spikes of high and low resource usage, VPA might be too aggressive for the job. That’s because it will keep replacing pods over and over again. In such a case, HPA might be a better solution for you.

However, using the VPA recommendations component will prove useful too, as it gives you valuable insights into resource usage in any case.

3. Cluster Autoscaler

Cluster Autoscaler changes the number of nodes in a cluster. It can only manage nodes on supported platforms – and each comes with specific requirements or limitations.

The autoscaler controller functions at the infrastructure level, so it requires permission to add and delete infrastructure resources. Make sure to manage the necessary credentials securely. One key best practice here is following the principle of least privilege.

When to use Cluster Autoscaler?

To manage the costs of running Kubernetes clusters on a cloud platform, it’s smart to dynamically scale the number of nodes to match the current cluster utilization. This is especially true for workloads designed to scale and meet the current demand.

How does Cluster Autoscaler work?

Cluster Autoscaler basically loops through two tasks. It checks for unschedulable pods and calculates whether it’s possible to consolidate all the currently deployed pods onto a smaller number of nodes.

Here’s how it works:

- Cluster Autoscaler checks clusters for pods that can’t be scheduled on any existing nodes.

- That might be due to inadequate CPU or memory resources. Another reason could be that the pod’s node taint tolerations or affinity rules fail to match an existing node.

- If a cluster contains unschedulable pods, the Autoscaler checks its managed node pools to determine whether adding a node would unblock the pod or not. If that’s the case, the Autoscaler adds a node to the node pool.

- Cluster Autoscaler also scans nodes in the node pools it manages.

- If it spots a node with pods that could be rescheduled to other nodes available in the cluster, the Autoscaler evicts them and removes the spare node.

- When deciding to move a pod, it factors in pod priority and PodDisruptionBudgets.
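The two checks in this loop can be sketched with a toy model. The code below is a heavy simplification that looks only at CPU requests and ignores memory, taints, affinity rules, pod priority, and PodDisruptionBudgets, all of which the real Cluster Autoscaler takes into account. Every name in it is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    name: str
    cpu_capacity_m: int                       # millicores
    pod_requests_m: list[int] = field(default_factory=list)

    @property
    def free_m(self) -> int:
        return self.cpu_capacity_m - sum(self.pod_requests_m)


def should_add_node(pending_requests_m: list[int], template_capacity_m: int) -> bool:
    """Scale up only if a new (template) node would unblock some pending pod."""
    return any(req <= template_capacity_m for req in pending_requests_m)


def find_removable_node(nodes: list[Node]) -> Optional[str]:
    """Return the first node whose pods all fit on the other nodes."""
    for candidate in nodes:
        free = [n.free_m for n in nodes if n is not candidate]
        fits = True
        # First-fit placement, largest pods first.
        for request in sorted(candidate.pod_requests_m, reverse=True):
            for i, room in enumerate(free):
                if request <= room:
                    free[i] -= request
                    break
            else:
                fits = False
                break
        if fits and free:                     # at least one other node must remain
            return candidate.name
    return None


nodes = [
    Node("a", 2000, [1900]),
    Node("b", 2000, [300]),
    Node("c", 2000, [200]),
]
print(should_add_node([500], 2000))   # a 500m pod fits on a new 2000m node
print(find_removable_node(nodes))     # pods of "b" fit on "c"
```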

Tips for using Cluster Autoscaler

1. Make sure that you’re using the correct version

Kubernetes evolves rapidly, so following all the new releases and features is difficult. When deploying Cluster Autoscaler, make sure that you’re using it with the recommended Kubernetes version. You can find a compatibility list in the Cluster Autoscaler project documentation.

2. Double-check cluster nodes for the same capacity

Otherwise, Cluster Autoscaler isn’t going to work correctly. It assumes that every node in the group has the same CPU and memory capacity. Based on this, it creates template nodes for each node group and makes autoscaling decisions on the basis of a template node.

That’s why you need to verify that the instance group to be autoscaled contains instances or nodes of the same type. And if you’re dealing with mixed instance types, ensure that they have the same resource footprint.

3. Define resource requests for every pod

Cluster Autoscaler makes scaling decisions on the basis of the scheduling status of pods and individual node utilization.

You must specify resource requests for each and every pod. Otherwise, the autoscaler won’t function correctly.

For example, Cluster Autoscaler will scale down any nodes that have utilization that is lower than the specified threshold.

It calculates utilization as the sum of requested resources divided by capacity. If any pods or containers are present without resource requests, the autoscaler decisions will be affected, leading to suboptimal functioning.

Make sure that all the pods scheduled to run in an autoscaled node or instance group have resource requests specified.
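Here is a small sketch of that utilization calculation and of how a pod without requests distorts it. It assumes utilization is taken as the higher of the CPU and memory ratios and uses 0.5 as the scale-down threshold, which matches the documented default of the scale-down-utilization-threshold setting. The function names and numbers are illustrative.

```python
def node_utilization(cpu_requests_m: list[int], cpu_allocatable_m: int,
                     mem_requests_mib: list[int], mem_allocatable_mib: int) -> float:
    """Sum of requested resources divided by capacity (max of CPU and memory)."""
    cpu = sum(cpu_requests_m) / cpu_allocatable_m
    mem = sum(mem_requests_mib) / mem_allocatable_mib
    return max(cpu, mem)


def is_scale_down_candidate(utilization: float, threshold: float = 0.5) -> bool:
    return utilization < threshold


# Two pods on a 4000m / 8192 MiB node. The second pod really needs 1500m,
# but in the first case it declares no CPU request, so it counts as 0.
without_request = node_utilization([500, 0], 4000, [1024], 8192)
with_request = node_utilization([500, 1500], 4000, [1024], 8192)

print(without_request, is_scale_down_candidate(without_request))
print(with_request, is_scale_down_candidate(with_request))
```

In the first case the node looks almost empty and becomes a scale-down candidate even though a busy pod is running on it. Once the request is declared, the node sits at the threshold and is left alone.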

4. Set the PodDisruptionBudget for kube-system pods

Kube-system pods prevent the autoscaler from scaling down the nodes on which they’re running by default. If pods end up on different nodes for some reason, they can prevent the cluster from scaling down.

To avoid this scenario, determine a pod disruption budget for system pods.

A pod disruption budget allows you to avoid disruptions to important pods and make sure that a required number is always running.

When specifying this budget for system pods, factor in the number of replicas of these pods that are provisioned by default. Most system pods run as single instance pods (aside from Kube-dns).

Restarting them could cause disruptions to the cluster, so avoid adding a disruption budget for single instance pods – for example, the metrics-server.
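The interaction between replica count and a disruption budget comes down to simple arithmetic. The sketch below assumes a budget expressed as minAvailable and shows how many pods may be evicted at once. The helper name is made up for illustration.

```python
def allowed_disruptions(healthy_replicas: int, min_available: int) -> int:
    """Number of pods that can be evicted without violating minAvailable."""
    return max(0, healthy_replicas - min_available)


# A single-instance pod with minAvailable=1 can never be evicted,
# so the node it runs on cannot be drained.
print(allowed_disruptions(healthy_replicas=1, min_available=1))

# With two replicas and minAvailable=1, one pod at a time can be moved.
print(allowed_disruptions(healthy_replicas=2, min_available=1))
```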

Best practices for Kubernetes autoscaling

1. Make sure that HPA and VPA policies don’t clash

Vertical Pod Autoscaler automatically adjusts the requests and limits configuration, reducing overhead and achieving cost savings. Horizontal Pod Autoscaler scales out by adding replicas, and it tends to scale up more readily than it scales down.

Double-check that the VPA and HPA policies aren’t interfering with each other. Review your bin packing density settings when designing clusters for a business- or purpose-class tier of service.

2. Use instance weighted scores

Let’s say that one of your workloads often ends up consuming more than it requested.

Is that happening because the resources are needed? Or were they consumed because they were simply available, but not critically required?

Use instance weighted scores when picking the instance sizes and types that are a good fit for autoscaling. Instance weighting comes in handy especially when you adopt a diversified allocation strategy and use spot instances.

3. Reduce costs with mixed instances

A mixed-instance strategy forges a path toward great availability and performance – at a reasonable cost.

You basically choose from various instance types. While some are cheaper and just good enough, they might not be a good match for high-throughput, low-latency workloads.

Depending on your workload, you can often select the cheapest machines and make it all work.

Or you could run it on a smaller number of machines with higher specs. This could potentially bring you great cost savings because each node requires installing Kubernetes on it, which adds a little overhead.

But how do you scale mixed instances?

In a mixed-instance situation, each instance type offers a different amount of resources. So, when you scale instances in autoscaling groups and use metrics like CPU and network utilization, you might get inconsistent metrics.

Cluster Autoscaler is a must-have here. It allows mixing instance types in a node group – but your instances need to have the same capacity in terms of CPU and memory.

Check this guide too: How to choose the best VM type for the job and save on your cloud bill

Can you automate Kubernetes autoscaling even more?

Teams usually need a balanced combination of all three Kubernetes autoscaling methods. That way, workloads run in a stable way during their peak load and you keep costs to a minimum when facing lower demand.

CAST AI comes with several automation mechanisms that make autoscaling even more efficient:

- Smooth autoscaling – CAST AI makes sure that the number of nodes in use matches the application’s requirements at all times, scaling them up and down automatically.

- Headroom policy – when a pod suddenly requests more CPU or memory than the resources available on any of the worker nodes, the CAST AI autoscaler matches the demand by keeping a buffer of spare capacity.

- Spot fallback – this feature guarantees that workloads designated for spot instances have the capacity to run even if they’re temporarily not available. To keep workloads running, CAST AI provisions a temporary on-demand node and uses it to schedule the workloads.

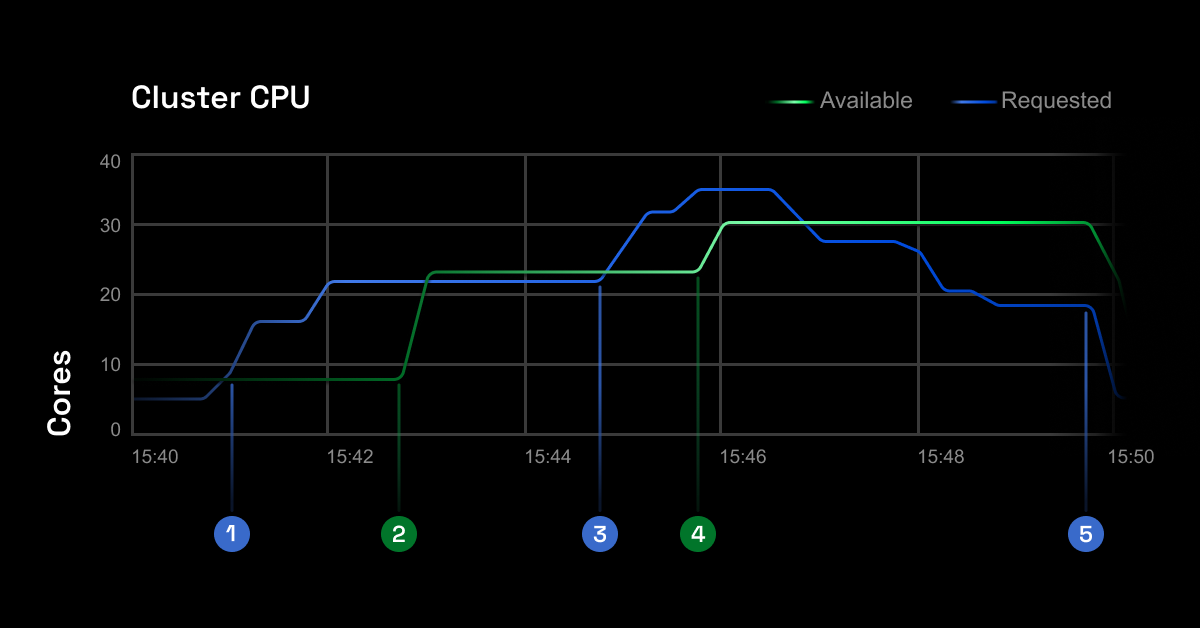

Here’s how closely the CAST AI autoscaler manages to follow the actual resource requests in your cluster.

Check how well your cluster is doing in terms of autoscaling and cost-efficiency by running the free savings report – it works with Amazon EKS, Kops, GKE, and AKS.

CAST AI clients save an average of 63% on their Kubernetes bills

Book a call to see how much you could save with cloud automation.

One of the most important characteristics of a Kubernetes cluster is autoscaling. It is a feature in which the cluster may increase the number of nodes as the demand for service response grows – and then reduce the number of nodes as the demand lowers.

Load balancing distributes the load equally across all the availability zones in a region while auto-scaling ensures that instances scale up or down in response to load.

Horizontal Pod Autoscaler alters the structure of your Kubernetes workload by increasing or reducing the number of pods in response to the workload’s CPU or memory usage – as well as custom metrics from within Kubernetes or external ones from sources outside of your cluster.

Cluster Autoscaler is a tool that allows you to increase or decrease the size of a Kubernetes cluster. This is done by adding or removing nodes based on the number of pending pods and node utilization metrics. The autoscaler adds nodes to a cluster whenever it identifies pending pods that can’t be scheduled because of resource shortages.

At the moment, Amazon EKS supports two autoscaling solutions: the Kubernetes Cluster Autoscaler and the open-source tool Karpenter. While the former uses AWS scaling groups, Karpenter works with the createFleet API.

You can scale up and down manually with the kubectl scale command.

You can change the number of pods managed by a ReplicaSet in two ways:

1. Edit the controller’s configuration using kubectl edit rs ReplicaSet_name, then change the replicas count up or down as required.

2. Use kubectl scale – for example, kubectl scale --replicas=2 rs/ReplicaSet_name scales a ReplicaSet down to manage two pods instead of six.


good angle on autoscaling

what’s the difference between VPA and HPA?

Hello, thank you for your question.

You can scale an application in two ways:

Vertically: by adding more resources (RAM/CPU/Disk IOPS) to the same instance,

Horizontally: by adding more instances (replicas) of the same application.

The problem with vertical scaling is that either the required hardware (RAM, CPU, Disk IOPS) in a single machine costs too much, or the cloud provider cannot provision a machine with enough resources.

We use replica sets in Kubernetes to achieve horizontal scaling. The Horizontal Pod Autoscaler allows automating the process of maintaining the replica count proportionally to the application load.

I’ve been using the wrong k8s version with VPA, and I can see that I’ve lost some potential savings. Lesson learnt: update your software, kids.

Combining all the autoscaling methods into one tool seems worthy of peak performance if everything works as smoothly as planned. My team found this really interesting, and we are going to put CAST to the test.

I’d love to see that CAST auto-scaler at work, in real time situation

Great tip on instance weighted scores, I bet that we have deployments where we use instances that could be replaced with more efficient ones, will definitely check that and update it

I don’t know how, but our team overlooked HPA and VPA scaling clashes, and we spent so much time thinking “why does this Kubernetes autoscaler act strange all the time” until one of our colleagues had the bright idea to re-check the parameters.

There are many guides on how to manage your Kubernetes autoscaler, but interestingly, I’ve found this one to be the easiest to understand and follow through, as it’s straight to the point and doesn’t go around in circles explaining things again and again.

Configuring VPA with updateMode: “Off” was a great kickstarter, with load recommendations for the autoscaler to work its magic. I appreciate this tip, and I don’t know how our team missed it at first.

Thanks for all of the tips in this article on kubernetes autoscaling, I was looking for something that would point me in the right directions and this surely did

Getting my recommendations for VPA with updateMode: “Off” was crucial to begin with. Somehow I missed that in all the articles I’ve skimmed through. Thanks, CAST!

I’ve been looking for information as valuable as this for quite some time now and finally landed on CAST, thanks for this informative article!

We’ve been using KEDA for quite some time and getting pretty good results, but as always, we are keeping one eye open to try out new things, and I think this is where CAST and its Kubernetes automation come into play!

Thanks for the content which I found to be just in the middle of somewhat technical but also easy to chew on

Please reach out to me at the email address attached to this comment. I’m interested to see this CAST autoscaler at work with our environments.

Really in depth guide, easy to read and follow!

We’ve just started using Kubernetes solely for the autoscaling features that it offers with some tools, and I’ve got to say that we didn’t expect as good a result as we got. Our spending literally just crashed like the stock market, and now we save around 40% more than we did before without Kubernetes. I guess you could say that we were a bit behind on things.