Bonus Material: 1-page container service comparison table for AWS (printable)

We all love containers for their scalability. But managing them can easily turn into overhead once you're running a large cluster.

This is where container orchestration comes in. When operating at scale, you need a platform that automates all the tasks related to the management, deployment, and scaling of container clusters.

There’s a reason why almost 90% of containers are orchestrated today.[1]

If you’re running containers on AWS, there are several orchestration options you can choose from:

- Elastic Container Service (ECS),

- Elastic Kubernetes Service (EKS),

- AWS Fargate,

- kOps for AWS.

Read on to find out which one is the best match for your workloads.

And even if you already know what’s what in the world of AWS Kubernetes, you could probably still use a few best practices to reduce your cloud bill.

Note: The world of cloud technology changes rapidly, and we update this article regularly to keep up. Last update: 14.01.2022.

What is Elastic Container Service (ECS)?

Amazon ECS stands for Amazon Elastic Container Service. It’s a scalable container orchestration platform developed by AWS and designed to run, stop, and manage containers in a cluster. Containers are defined in task definitions, and ECS runs and manages them in the cloud as tasks.
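The task definition is the unit ECS works from, so it helps to see one. Below is a minimal sketch, assuming a Fargate-compatible web container: it builds a task definition as a Python dict and prints it as JSON. The helper `build_task_definition`, the family name `demo-web`, and the `nginx:latest` image are hypothetical; the field names follow the ECS task definition format, and the JSON could be passed to `aws ecs register-task-definition --cli-input-json`. A real definition would usually add an execution role, logging, and health checks.

```python
import json


def build_task_definition(family: str, image: str, cpu: str, memory: str, port: int) -> dict:
    """Return a minimal ECS task definition (hypothetical helper, not an AWS API)."""
    return {
        "family": family,                        # revisions are grouped under this name
        "networkMode": "awsvpc",                 # each task gets its own ENI
        "requiresCompatibilities": ["FARGATE"],  # ["EC2"] for the EC2 launch type
        "cpu": cpu,                              # task-level CPU units, as a string
        "memory": memory,                        # task-level memory in MiB, as a string
        "containerDefinitions": [
            {
                "name": "web",
                "image": image,
                "essential": True,               # the task stops if this container stops
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
            }
        ],
    }


if __name__ == "__main__":
    task_definition = build_task_definition("demo-web", "nginx:latest", "256", "512", 80)
    print(json.dumps(task_definition, indent=2))
```

The same dict could also be passed as keyword arguments to boto3's `register_task_definition`, which accepts these field names.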

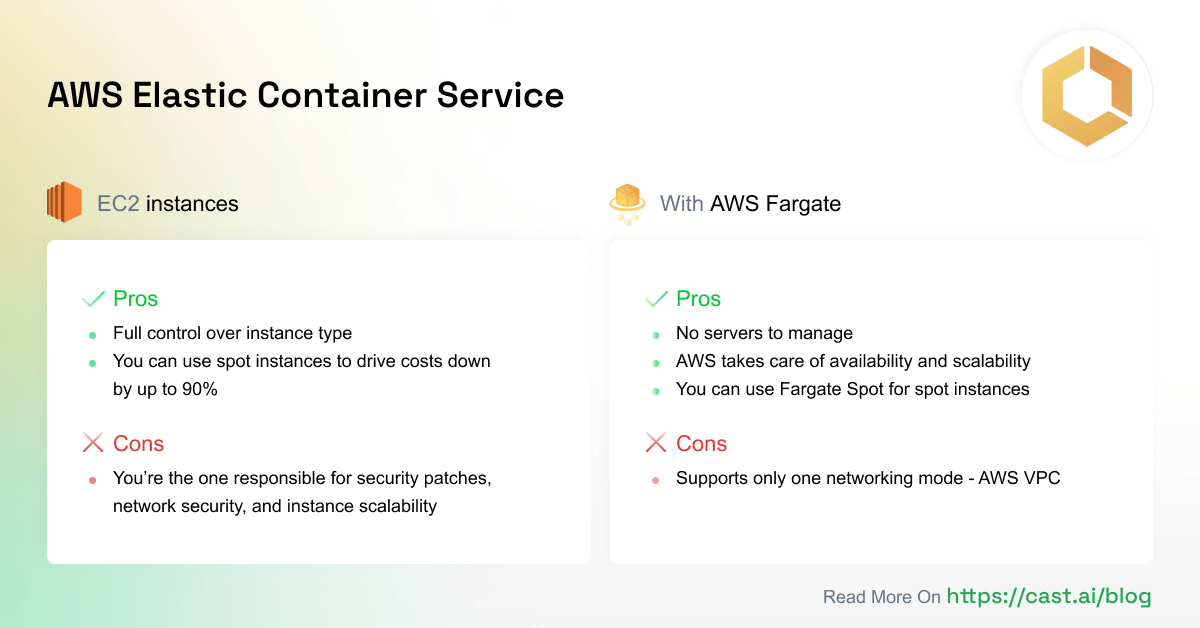

You can use ECS with EC2 instances (best for long-running tasks) or AWS Fargate (good for serverless tasks). Let’s take a closer look at these two options:

ECS with EC2 instances

In this model, containers are deployed to EC2 instances (VMs) created for the cluster. ECS manages these instances along with the tasks launched from your task definitions.

Pros:

- You have full control over the type of EC2 instance used here. For example, you can use a GPU-optimized instance type if you need to run training for a machine learning model that comes with unique GPU requirements.

- You can take advantage of Spot Instances, which cost up to 90% less than On-Demand instances.

Cons:

- You’re the one responsible for security patches and network security of the instances, as well as their scalability in the cluster (but thankfully, ECS cluster auto scaling can scale the instances for you).
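On ECS, the EC2 instances themselves are usually scaled with ECS cluster auto scaling: you wrap an Auto Scaling group in a capacity provider and let ECS managed scaling adjust it. Here's a minimal sketch, assuming you already have an Auto Scaling group, that builds the request parameters for the ECS `CreateCapacityProvider` call. The helper name, the capacity provider name, and the placeholder ARN are hypothetical, and nothing is sent to AWS.

```python
def capacity_provider_params(name: str, asg_arn: str, target_capacity: int = 100) -> dict:
    """Build keyword arguments for ECS CreateCapacityProvider (hypothetical helper).

    target_capacity is the utilization percentage that ECS managed scaling
    aims for across the Auto Scaling group; valid values are 1-100.
    """
    if not 1 <= target_capacity <= 100:
        raise ValueError("target_capacity must be between 1 and 100")
    return {
        "name": name,
        "autoScalingGroupProvider": {
            "autoScalingGroupArn": asg_arn,
            "managedScaling": {"status": "ENABLED", "targetCapacity": target_capacity},
            "managedTerminationProtection": "DISABLED",
        },
    }


if __name__ == "__main__":
    # Placeholder ARN. With AWS credentials, the result could be passed to
    # boto3.client("ecs").create_capacity_provider(**params).
    params = capacity_provider_params(
        "demo-ec2-capacity",
        "arn:aws:autoscaling:us-east-1:123456789012:autoScalingGroup:"
        "00000000-0000-0000-0000-000000000000:autoScalingGroupName/demo-asg",
        target_capacity=90,
    )
    print(params)
```

A target below 100 leaves some spare capacity in the group, trading a little cost for faster task placement.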

Cost: You’re charged for the EC2 instances running in your cluster, plus VPC networking.

ECS with AWS Fargate

In this variant, you don’t need to worry about EC2 instances or servers at all. Just choose the CPU and memory combination each task needs, and AWS runs your containers on capacity it manages.

Pros:

- No servers to manage.

- AWS is in charge of container availability and scalability. Still, choose your CPU and memory settings carefully: if they’re too small, your application risks becoming unavailable.

- You can use Fargate Spot, which runs interruption-tolerant ECS tasks at up to a 70% discount off the regular Fargate price.
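Fargate doesn't accept arbitrary CPU and memory values; each task size must be one of the supported combinations. The sketch below checks a requested size against the Linux task combinations as listed in the ECS documentation at the time of writing (CPU in units where 1024 = 1 vCPU, memory in MiB). The table and the `is_valid_fargate_size` helper are our own illustration, so confirm the values against the current docs, which may list additional sizes.

```python
# Supported Fargate Linux task sizes: CPU units -> allowed memory values (MiB).
# Based on the ECS docs at the time of writing; newer, larger sizes may exist.
VALID_FARGATE_SIZES = {
    256: [512, 1024, 2048],
    512: list(range(1024, 4096 + 1, 1024)),    # 1-4 GB in 1 GB steps
    1024: list(range(2048, 8192 + 1, 1024)),   # 2-8 GB in 1 GB steps
    2048: list(range(4096, 16384 + 1, 1024)),  # 4-16 GB in 1 GB steps
    4096: list(range(8192, 30720 + 1, 1024)),  # 8-30 GB in 1 GB steps
}


def is_valid_fargate_size(cpu: int, memory: int) -> bool:
    """Return True if (cpu, memory) is a supported Fargate task size."""
    return memory in VALID_FARGATE_SIZES.get(cpu, [])


if __name__ == "__main__":
    for cpu, memory in [(256, 512), (256, 4096), (1024, 3072)]:
        print(cpu, memory, is_valid_fargate_size(cpu, memory))
```

Validating sizes before deploying catches mismatches early instead of at task registration time.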

Cons:

- ECS + Fargate supports only one networking mode – awsvpc – which limits your control over the networking layer (and you might need that in some scenarios).

Cost: You’re charged for the vCPU and memory you select, for as long as your tasks run. The number of vCPUs and GB of memory, multiplied by running time, determines the cost of running your cluster.
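The billing model boils down to resources multiplied by time. The sketch below turns that into a small estimate, assuming per-vCPU-hour and per-GB-hour rates that you look up for your region; the rates in the example are hypothetical placeholders, not current AWS prices. The optional `spot_discount` parameter models the up-to-70% Fargate Spot discount mentioned above.

```python
def fargate_cost(vcpu: float, memory_gb: float, hours: float,
                 vcpu_hour_rate: float, gb_hour_rate: float,
                 spot_discount: float = 0.0) -> float:
    """Estimate the cost of one Fargate task; all rates are caller-supplied."""
    if not 0.0 <= spot_discount < 1.0:
        raise ValueError("spot_discount must be in [0, 1)")
    on_demand = (vcpu * vcpu_hour_rate + memory_gb * gb_hour_rate) * hours
    return on_demand * (1.0 - spot_discount)


if __name__ == "__main__":
    # Hypothetical rates for illustration only; check current regional pricing.
    VCPU_RATE = 0.04   # USD per vCPU-hour (placeholder)
    GB_RATE = 0.004    # USD per GB-hour (placeholder)
    regular = fargate_cost(1, 2, 730, VCPU_RATE, GB_RATE)
    spot = fargate_cost(1, 2, 730, VCPU_RATE, GB_RATE, spot_discount=0.70)
    print(f"1 vCPU / 2 GB for 730 h: ${regular:.2f}, with Fargate Spot at 70% off: ${spot:.2f}")
```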

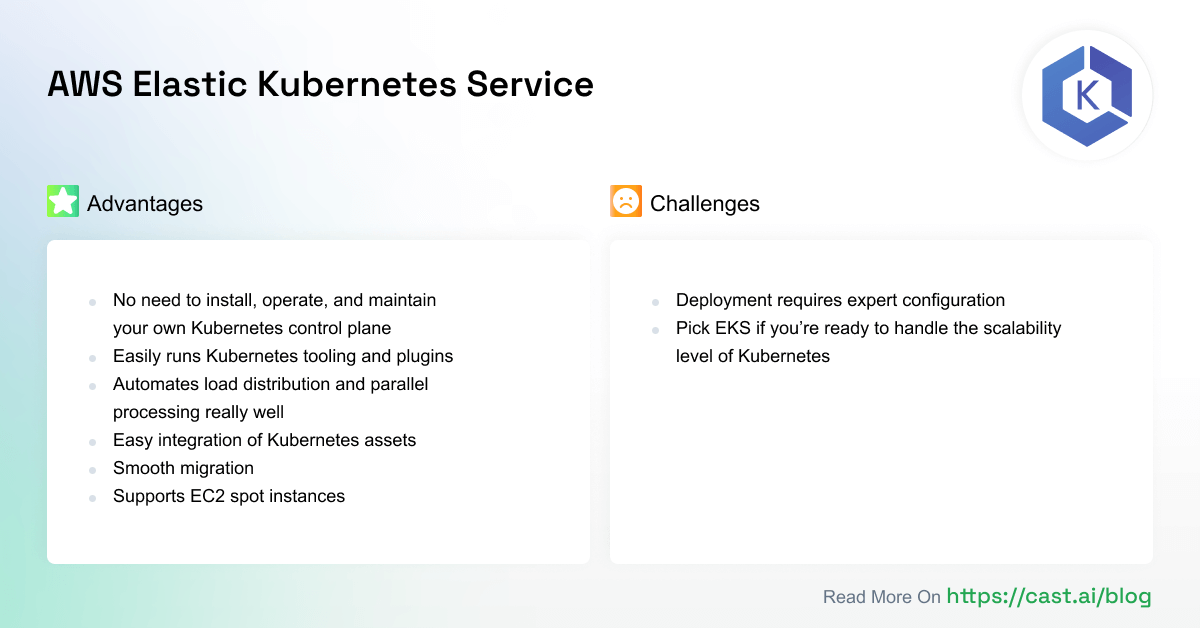

What is Elastic Kubernetes Service (EKS)?

EKS is a managed service that provisions and operates the Kubernetes control plane for you. You have no access to the control plane (master) nodes, since they run in an AWS-managed account.

To run a Kubernetes workload, EKS sets up the control plane and Kubernetes API on AWS-managed infrastructure; once you add worker nodes, you’re good to go.

At this point, you can deploy workloads using standard tools like kubectl, the Kubernetes Dashboard, Helm, and Terraform.

Advantages of AWS EKS

- You don’t have to install, operate, and maintain your Kubernetes control plane.

- EKS allows you to easily run tooling and plugins developed by the Kubernetes open-source community.

- EKS automates load distribution and parallel processing, work that would otherwise fall on your DevOps engineers.

- Your Kubernetes assets integrate seamlessly with AWS services if you use EKS.

- EKS uses Amazon VPC networking, so pods receive IP addresses from your VPC.

- Applications running on EKS are fully compatible with applications running in any standard Kubernetes environment, so you can migrate to EKS without changing your code.

- EKS supports EC2 Spot Instances through managed node groups, which follow Spot best practices and can deliver significant cost savings.
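To make the Spot point concrete, here is a sketch that builds the parameters for an EKS managed node group running on Spot capacity. The parameter names match the EKS `CreateNodegroup` API (as used by boto3's `create_nodegroup`); the cluster, node group, subnet, and role values are hypothetical placeholders. Requiring at least two instance types is our own guardrail that reflects the common Spot advice to diversify, not an AWS rule.

```python
from typing import Dict, Sequence


def spot_nodegroup_params(
    cluster: str,
    nodegroup: str,
    subnets: Sequence[str],
    node_role_arn: str,
    instance_types: Sequence[str],
    min_size: int = 1,
    max_size: int = 5,
    desired_size: int = 2,
) -> Dict:
    """Build kwargs for EKS CreateNodegroup with Spot capacity (hypothetical helper)."""
    # Our own guardrail: several instance types let the node group draw from
    # more than one Spot capacity pool, reducing the impact of interruptions.
    if len(set(instance_types)) < 2:
        raise ValueError("use at least two instance types for a Spot node group")
    if not min_size <= desired_size <= max_size:
        raise ValueError("desired_size must be between min_size and max_size")
    return {
        "clusterName": cluster,
        "nodegroupName": nodegroup,
        "capacityType": "SPOT",
        "instanceTypes": list(instance_types),
        "scalingConfig": {"minSize": min_size, "maxSize": max_size, "desiredSize": desired_size},
        "subnets": list(subnets),
        "nodeRole": node_role_arn,
    }


if __name__ == "__main__":
    # With AWS credentials: boto3.client("eks").create_nodegroup(**params)
    params = spot_nodegroup_params(
        "demo-cluster", "spot-workers", ["subnet-aaaa", "subnet-bbbb"],
        "arn:aws:iam::123456789012:role/demo-eks-node-role",
        ["m5.large", "m5a.large", "m4.large"],
    )
    print(params)
```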

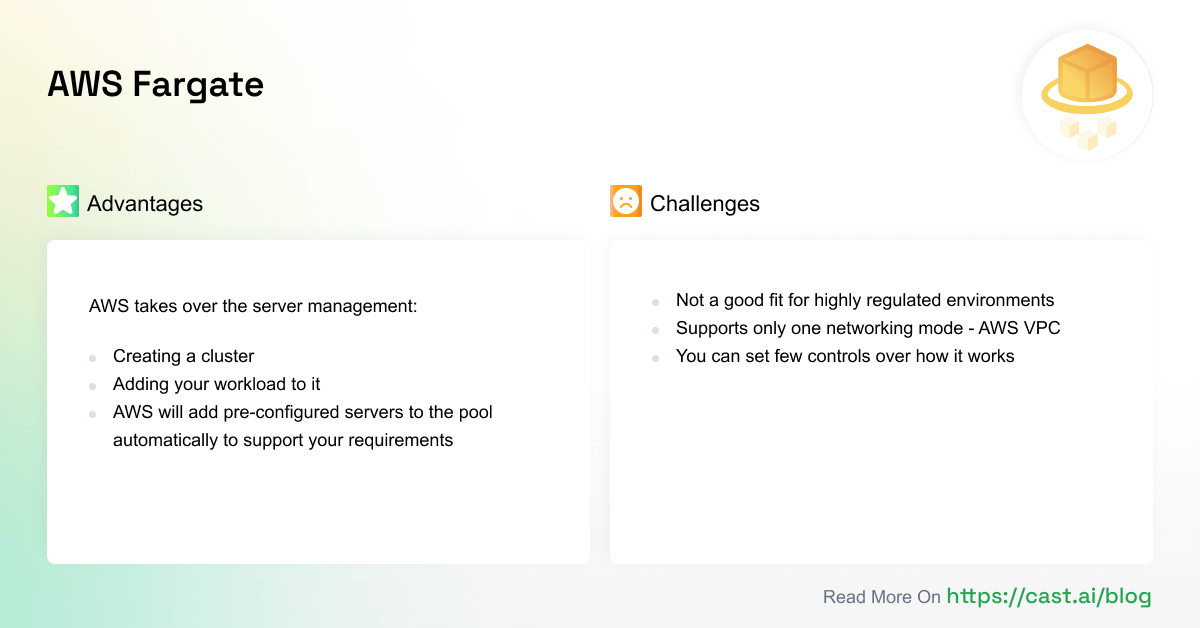

What is AWS Fargate?

Usually, a container management platform divides a server’s CPU and memory among your workloads. But the underlying server is still there, just carved up differently, and managing it can become a burden.

AWS solves this problem with Fargate by taking over the management of that underlying server.

Instead of doing all the tasks yourself – from booting a server and installing the agent to making sure that it’s up-to-date – you can simply create a cluster and add your workload to it. AWS will add pre-configured servers to the “pool” automatically to support your requirements.

Today, 32% of AWS container environments run on Fargate.[2]

Here are a few things you should know about Fargate before jumping on the Fargate-managed bandwagon:

- It’s not a good fit for highly regulated environments where companies use dedicated tenancy hosting.

- The combination of ECS and Fargate supports only one networking mode (awsvpc) that comes with limitations if you need to have deep control over the networking layer.

- Fargate allocates resources automatically, and you have only a few controls over how it does so. Without close monitoring (for example, in R&D environments), this can easily lead to uncontrolled cost growth. One way to deal with that is through self-hosting and creating limited-capacity clusters.

What is kOps?

kOps is short for Kubernetes Operations (also spelled kops or Kops), an open-source set of tools maintained by the Kubernetes community for installing, operating, and deleting Kubernetes clusters. AWS is one of the cloud platforms it supports.

You can also use it to upgrade a cluster from an older Kubernetes version to a newer one and to manage cluster add-ons. After creating a cluster, you can use the usual kubectl CLI to manage resources.

To quote the documentation:

kOps will not only help you create, destroy, upgrade and maintain production-grade, highly available, Kubernetes cluster, but it will also provision the necessary cloud infrastructure.

Key features of kOps:

- kOps simplifies Kubernetes cluster setup and management, especially in comparison to the amount of effort needed to manually set up master and worker nodes.

- It automates the provisioning of highly available Kubernetes clusters.

- The tool manages Route 53, Auto Scaling groups, and ELBs for tasks ranging from master and node bootstrapping to the API server and rolling updates to your cluster.

- It’s multi-architecture ready and supports ARM64.

- It supports multiple cloud platforms, which makes it a better choice if you’re afraid of getting locked into a specific Kubernetes offering.

- It’s an open-source tool, so you can use it for free. However, you still need to pay for and maintain the underlying infrastructure created by kOps to manage your cluster.

kOps vs. EKS – Key similarities and differences

Quickly see all four services side by side in this 1-page comparison table:

Setting up a Kubernetes cluster

EKS

Setting up a cluster in EKS is relatively complicated and comes with some prerequisites. You need to install and configure the AWS CLI and aws-iam-authenticator and set up IAM permissions and users, all of which adds to your workload.

EKS doesn’t create worker nodes automatically, so you’re also in charge of that process. Setting up EKS with CloudFormation or Terraform takes extra effort, too.
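One of those IAM prerequisites is a cluster role that the EKS service can assume. As a sketch, here is the trust policy for such a role as a Python dict, plus the AWS managed policy commonly attached to it. The role name in the comments is hypothetical, and the boto3 calls appear only as comments so the example runs without AWS credentials.

```python
import json

# Trust policy allowing the EKS service to assume the cluster IAM role.
EKS_CLUSTER_TRUST_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {"Service": "eks.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }
    ],
}

# AWS managed policy typically attached to the EKS cluster role.
EKS_CLUSTER_POLICY_ARN = "arn:aws:iam::aws:policy/AmazonEKSClusterPolicy"


def trust_policy_json() -> str:
    """Serialize the trust policy in the form IAM expects (a JSON string)."""
    return json.dumps(EKS_CLUSTER_TRUST_POLICY, indent=2)


if __name__ == "__main__":
    # With credentials, roughly:
    #   iam = boto3.client("iam")
    #   iam.create_role(RoleName="demo-eks-cluster-role",
    #                   AssumeRolePolicyDocument=trust_policy_json())
    #   iam.attach_role_policy(RoleName="demo-eks-cluster-role",
    #                          PolicyArn=EKS_CLUSTER_POLICY_ARN)
    print(trust_policy_json())
```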

kOps

kOps does the job much faster. It’s a CLI tool you install on your local machine together with kubectl. To get a cluster running, use the kops create cluster command. The great thing about kOps is that it manages most of the AWS resources your Kubernetes cluster needs.
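As an illustration, the sketch below assembles a kops create cluster invocation in Python and prints it as a shell command. The cluster name, S3 state store bucket, zones, and node settings are hypothetical placeholders; `--name`, `--state`, `--zones`, `--node-count`, and `--node-size` are standard kops flags. Without `--yes`, kops create cluster only writes the cluster spec to the state store; running `kops update cluster --name <name> --yes` afterwards provisions the AWS resources.

```python
import shlex
from typing import List, Sequence


def kops_create_cluster_cmd(name: str, state_store: str, zones: Sequence[str],
                            node_count: int = 2, node_size: str = "t3.medium") -> List[str]:
    """Build the argv for `kops create cluster` (hypothetical helper)."""
    if not zones:
        raise ValueError("at least one availability zone is required")
    return [
        "kops", "create", "cluster",
        f"--name={name}",                # cluster name (a DNS name you control)
        f"--state={state_store}",        # S3 bucket holding the kOps state store
        f"--zones={','.join(zones)}",    # zones for the worker nodes
        f"--node-count={node_count}",
        f"--node-size={node_size}",      # EC2 instance type for worker nodes
    ]


if __name__ == "__main__":
    cmd = kops_create_cluster_cmd("k8s.example.com", "s3://example-kops-state-store",
                                  ["us-east-1a", "us-east-1b"])
    print(shlex.join(cmd))  # could be run with subprocess.run(cmd, check=True)
```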

Unlike EKS, kOps creates master nodes as EC2 instances, so you can always access them directly to modify the networking layer, instance size, and so on. You can monitor these nodes directly, too.

Managing a Kubernetes cluster

EKS

- EKS comes with a well-defined way of upgrading the control plane with minimal disruption. You can then update worker nodes by creating a new worker group from the newer EKS-optimized AMI and migrating your workload to the new nodes.

- Scaling up the cluster is simple and based on adding more worker nodes. The control plane is fully managed, so there’s no need to worry about resizing or upgrading master nodes as your cluster grows.

- Carrying out the most common maintenance tasks is easy in EKS – you can add worker nodes, replace them, terminate instances, and upgrade your setup with minimal disruption to your cluster.
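EKS upgrades the control plane one Kubernetes minor version at a time, so jumping several versions means a sequence of upgrades. This small sketch, with a hypothetical helper name, lists the intermediate versions. Replacing worker nodes after each step is one conservative approach, not a requirement stated by AWS.

```python
from typing import List


def eks_upgrade_path(current: str, target: str) -> List[str]:
    """List control plane versions to step through, one minor version at a time."""
    cur_major, cur_minor = (int(part) for part in current.split("."))
    tgt_major, tgt_minor = (int(part) for part in target.split("."))
    if cur_major != tgt_major or tgt_minor < cur_minor:
        raise ValueError("only forward upgrades within the same major version are supported")
    return [f"{cur_major}.{minor}" for minor in range(cur_minor + 1, tgt_minor + 1)]


if __name__ == "__main__":
    for version in eks_upgrade_path("1.19", "1.22"):
        # Conservative approach: after each control plane step, move workloads
        # to worker nodes built from the matching EKS-optimized AMI.
        print(f"upgrade control plane to {version}, then replace worker nodes")
```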

kOps

- kOps lets you create a cluster really fast, but managing it is more complicated. There’s a lot of groundwork to cover when upgrading and replacing master nodes for new Kubernetes versions. Creating a new cluster in a new VPC with kOps might be easier than that.

- When it comes to maintenance tasks like adding, replacing, and upgrading worker nodes, kOps performs quite similarly to EKS. AutoScaling Groups come in handy here.

Security

EKS

- With EKS, you benefit from the Amazon EKS Shared Responsibility Model: AWS is responsible for securing the control plane, so you’re not left alone with it.

- The platform may be relatively new, but you can count on AWS’s many years of security expertise.

- Note that by default, only the IAM identity that created an EKS cluster has administrator access to it. Managing permissions with IAM is a piece of cake if you already use other AWS services that rely on it.

- Setting up encrypted root volumes and private networking is easy.

- The AWS account you use for EKS doesn’t have root access to the master nodes for the cluster, so that’s another layer of protection (you’re not likely to open up your master nodes to SSH access from the internet by accident).

kOps

- While you still benefit from the AWS Shared Responsibility Model for EC2 and other services, you don’t get that extra security support for the control plane.

- A cluster created with kOps gets private networking, encrypted root volumes, and security group controls. You can also set up IAM authentication like in EKS.

- kOps also creates security groups and combines them with private networking to make your cluster more secure.

AWS EKS vs. ECS – Similarities

1. They both have a layer of abstraction

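EKS and ECS both add a layer of abstraction on top of containers: EKS groups containers into pods (typically managed through deployments), while ECS groups them into tasks (typically managed through services).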

Their functionalities are quite similar. Both ECS and EKS have an abstraction called cluster – a combination of all the working components.
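For comparison with the ECS task definition, here is a minimal Kubernetes Deployment expressed as a Python dict; once serialized to JSON, kubectl can apply it (kubectl apply -f deployment.json). Its rough ECS counterpart would be a service keeping a desired count of tasks from one task definition. The names, image, and replica count are hypothetical, and the helper is our own illustration.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> dict:
    """Build a minimal apps/v1 Deployment (hypothetical helper)."""
    labels = {"app": name}  # the selector must match the pod template labels
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,  # roughly what desiredCount is to an ECS service
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(deployment_manifest("web", "nginx:latest", 3, 80), indent=2))
```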

2. They allow a mix of AWS compute platforms

Whether you run your containers on ECS or EKS, you can choose one or more AWS compute options:

- EC2 Instances – virtual machines that offer a wide range of options and capacities.

- AWS Fargate – for serverless applications.

- AWS Outposts – a fully managed service that brings the same AWS infrastructure, services, APIs, and tools to your data center or on-premises facility, making hybrid setups consistent. Great for workloads that need low-latency access to on-premises systems or local data processing.

- AWS Local Zones – an AWS infrastructure deployment that places compute, storage, database, and other services closer to large population, industry, and IT centers. Perfect for applications that you want running closer to end users.

- AWS Wavelength – infrastructure embedded in telecommunications providers’ 5G networks and optimized for mobile edge computing. It lets application traffic avoid the latency of multiple hops across the internet to reach its destination. A great pick for low-latency mobile edge applications.

How do you choose the best compute option? Consider your selection across these four dimensions:

- performance,

- cost,

- availability,

- reliability.

Understanding the differences between all these options is essential because they come with different financial commitments and complexity in management.

3. You don’t need to monitor or operate them

These managed services take much of the operational effort off your plate and allow your teams to focus on core applications.

AWS runs and maintains the control plane for you and designs it to stay highly available, including while it’s being updated to a newer release. Note that on EKS, you still decide when to upgrade to a new Kubernetes minor version.

4. They share the approach to security

To access services and resources securely, AWS provides Identity and Access Management (IAM). You can create users and user groups, and then assign permissions to them.

This control system is available in both ECS and EKS. For example, you can use IAM to limit who can access ECS tasks or Kubernetes workloads.
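As a sketch of what that looks like for ECS, the policy below allows listing and describing tasks, but only within one cluster, by using the `ecs:cluster` condition key. The region, account ID, and cluster name are placeholders, and the helper is hypothetical. Check the ECS service authorization reference to confirm which actions support that condition key before relying on it.

```python
import json


def ecs_cluster_read_only_policy(region: str, account_id: str, cluster: str) -> dict:
    """IAM policy allowing task listing/describing in one ECS cluster (hypothetical helper)."""
    cluster_arn = f"arn:aws:ecs:{region}:{account_id}:cluster/{cluster}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["ecs:ListTasks", "ecs:DescribeTasks"],
                "Resource": "*",
                # Restrict the actions to requests that target this cluster.
                "Condition": {"ArnEquals": {"ecs:cluster": cluster_arn}},
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(ecs_cluster_read_only_policy("us-east-1", "123456789012", "demo-cluster"), indent=2))
```

On the EKS side, access to Kubernetes workloads is typically controlled by mapping IAM identities to Kubernetes RBAC permissions.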

AWS EKS vs. ECS – Differences

Summary:

Pricing

- ECS – ECS is free of charge and you only pay for the compute costs. It’s a good match for those who are starting to explore microservices and containers.

- EKS – the solution costs $0.10 per hour per Kubernetes cluster (c. $74 per month) + compute costs. Pick it if you’re ready to handle Kubernetes scalability.

Deployment

- ECS – simple to deploy, with no control plane to manage; you configure and deploy directly from the AWS Management Console. Requires less expertise and operational knowledge.

- EKS – more complex deployment as you need to configure and deploy pods via Kubernetes first. Requires expert skills.

Multi-cloud portability

- ECS is an AWS proprietary technology and presents a potential risk of vendor lock-in. EKS is based on open-source Kubernetes, so your workloads are more portable.

Networking

- ECS – limited number of ENIs per instance. Might not be enough to support all the containers you want running on a particular instance.

- EKS – greater flexibility, you can share an ENI between multiple pods and place more pods per instance.

Community support

- ECS – limited community assistance; you rely mainly on corporate AWS support.

- EKS – plenty of community support and resources.

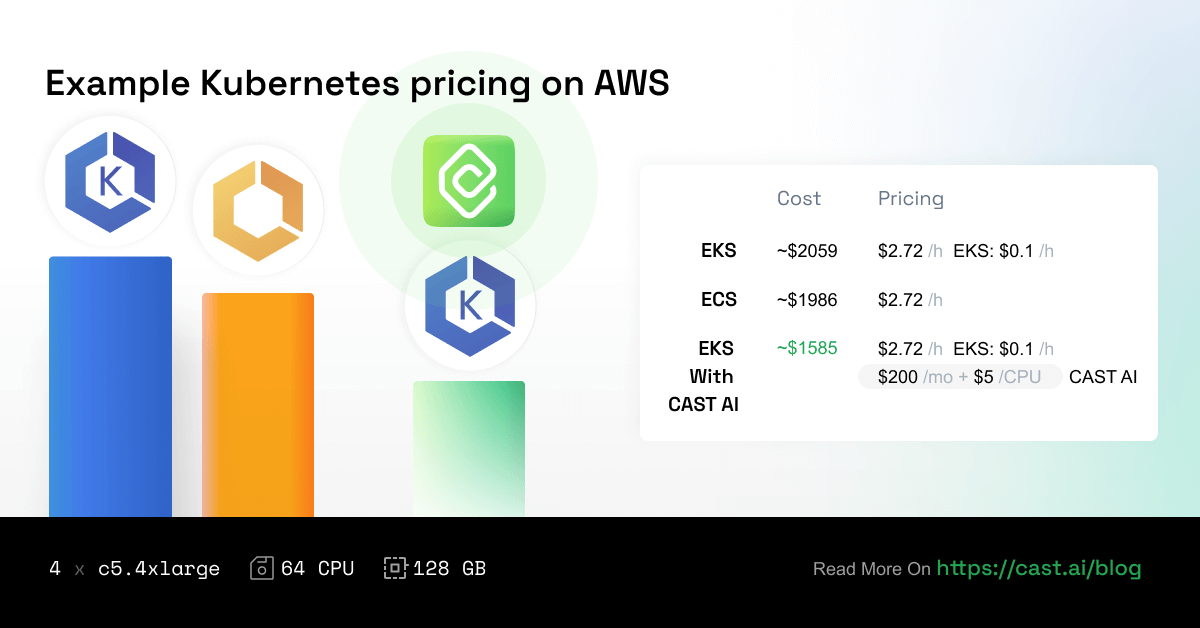

1. Pricing

In general, if you run ECS and EKS clusters on EC2 instances, you’ll be paying for compute costs that depend on the instance type you pick and its running time.

ECS doesn’t come with any additional charges, but EKS does.

EKS will charge you $0.10 per hour per Kubernetes cluster. This amounts to c. $74 per month, which doesn’t seem like a lot. But the costs might add up quickly depending on your setup.

If you’re just exploring microservices and containers, ECS is a better option. And if you’re ready to handle the scalability level of Kubernetes, the $74 extra on your bill isn’t going to make much difference against your overall compute costs.
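The monthly figure is simple arithmetic: $0.10 × 24 hours × 31 days = $74.40 per cluster, or $73 for an average 730-hour month. Where it adds up is the number of clusters, since the fee applies to each one. A tiny sketch using the rate quoted in this article (check current pricing before relying on it; the helper name is hypothetical):

```python
EKS_FEE_PER_CLUSTER_HOUR = 0.10  # USD, the rate quoted in this article


def eks_control_plane_fee(clusters: int, hours: float) -> float:
    """EKS control plane charge only; worker node compute is billed separately."""
    return clusters * hours * EKS_FEE_PER_CLUSTER_HOUR


if __name__ == "__main__":
    print(f"1 cluster, 31-day month: ${eks_control_plane_fee(1, 24 * 31):.2f}")
    print(f"1 cluster, 730-hour month: ${eks_control_plane_fee(1, 730):.2f}")
    print(f"5 clusters, 730-hour month: ${eks_control_plane_fee(5, 730):.2f}")
```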

On the other hand, EKS enables you to use Kubernetes cost monitoring and automated cost optimization with CAST AI, which typically cuts cluster costs by around half. Here’s how: How to reduce your Amazon EKS costs by half in 15 minutes.

Interested in other cloud vendors’ prices? Check Cloud Pricing Comparison: AWS vs. Azure vs. Google Cloud Platform in 2022.

2. Deployment

You can set up both EKS and ECS from the AWS management console. But then things start looking different.

ECS is really simple to deploy. After all, it was designed to be a simple API for creating containerized workloads without any complex abstractions. There’s no control plane to manage, so once your cluster is set up, you can configure and deploy tasks directly from the AWS Management Console.

Deploying clusters on EKS is a bit more complex and requires expert configuration. You need to configure and deploy pods via Kubernetes first because EKS is just another layer for creating K8s clusters on AWS.

So compared to ECS, you need more expertise and operational knowledge to deploy and manage applications on EKS.

3. Multi-cloud portability

The ideal scenario is to move your workloads from one cloud provider to another with minimal disruption. This is what portability is all about. To achieve it, you need interoperability among cloud services.

While ECS is an AWS proprietary technology, EKS is based on Kubernetes, which is open-source.

Kubernetes in EKS allows you to package your containers and move them to another platform quickly. ECS might lock you in.

So if you build an application in ECS, you’re likely to encounter the vendor lock-in issue in the long run. And if you choose to design your application on Kubernetes, you can basically run it on any other Kubernetes cluster – from the cloud to on-premises.

4. Networking

In ECS, the awsvpc network mode gives each task its own elastic network interface (ENI), attached to the container instance hosting it.

Each EC2 instance type has a default limit on the number of network interfaces that can be attached to it (the primary network interface counts as one). Just to give you an idea, a c5.large instance can have up to 3 ENIs attached to it by default, which leaves room for 2 tasks in awsvpc mode.

In ECS, the maximum number of ENIs you can assign varies by EC2 type. Even though AWS increased the limits, this might not be enough to support all the containers you want running on that particular instance.

Note that ECS supports launching container instances with larger ENI density using specific EC2 types. When you pick such a type and opt into the awsvpcTrunking account setting, you’ll get some additional ENIs on newly launched container instances. So, you can place more tasks using the awsvpc network mode on every container instance.

With EKS, you can assign a dedicated network interface to a pod to improve security. All the containers inside that pod share its network namespace and IP address.

You can share an ENI between multiple pods, which allows you to place more pods per instance.
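To put rough numbers on the difference, the sketch below compares awsvpc tasks per ECS instance without ENI trunking (one ENI per task, minus the primary interface) with the default EKS pod limit under the Amazon VPC CNI, commonly computed as ENIs × (IPv4 addresses per ENI − 1) + 2. The example uses c5.large figures from the EC2 documentation at the time of writing (3 network interfaces, 10 IPv4 addresses each). Helper names are hypothetical, and real limits depend on the instance type and CNI settings (for example, prefix delegation raises them).

```python
def ecs_awsvpc_tasks(max_enis: int) -> int:
    """awsvpc tasks per instance without trunking: one ENI each, minus the primary ENI."""
    return max_enis - 1


def eks_max_pods(max_enis: int, ipv4_per_eni: int) -> int:
    """Default VPC CNI pod limit: each ENI's primary IP isn't given to pods,
    plus 2 for host-network pods such as kube-proxy and aws-node."""
    return max_enis * (ipv4_per_eni - 1) + 2


if __name__ == "__main__":
    C5_LARGE_ENIS, C5_LARGE_IPS_PER_ENI = 3, 10  # from EC2 docs at the time of writing
    print("ECS awsvpc tasks on c5.large:", ecs_awsvpc_tasks(C5_LARGE_ENIS))
    print("EKS max pods on c5.large:", eks_max_pods(C5_LARGE_ENIS, C5_LARGE_IPS_PER_ENI))
```

The gap comes from EKS handing out secondary IP addresses on a shared ENI, while awsvpc mode without trunking consumes a whole ENI per task.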

5. Community support

The open-source Kubernetes wins over the proprietary ECS here. ECS offers limited community assistance, so you can mainly count on AWS’s corporate support.

When running Kubernetes in EKS, you get:

- community support (Stack Overflow, GitHub issues),

- resources (from official training to online courses),

- community-maintained tools like kubectl extensions, Helm Charts, or Kubernetes Operators.

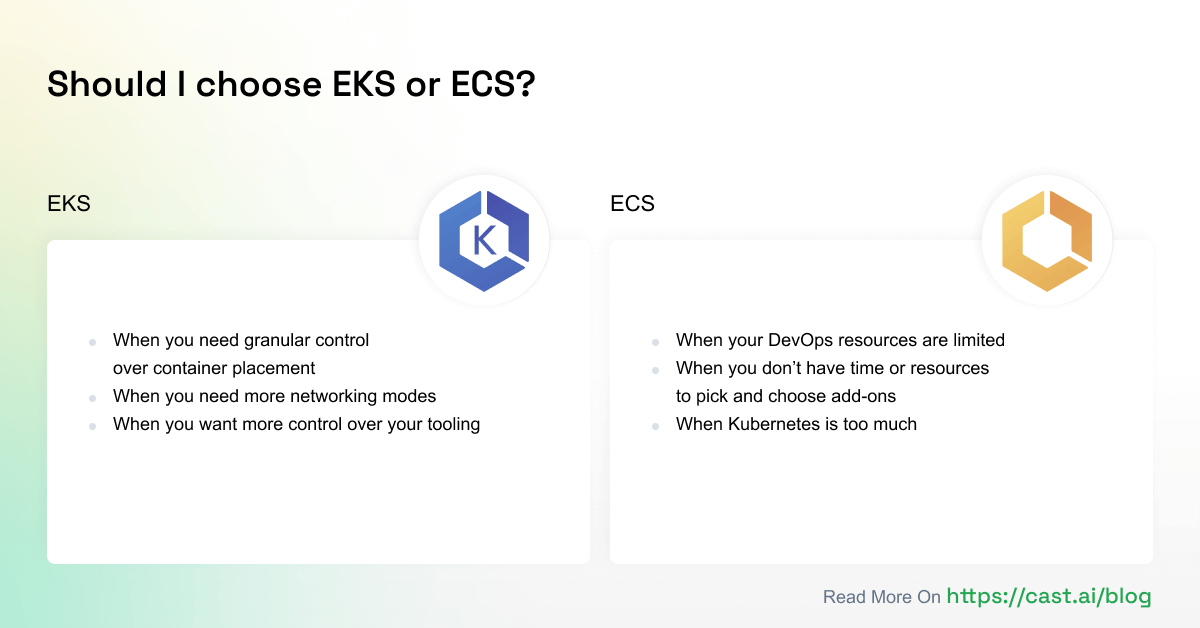

When to choose EKS?

For some teams, ECS proves to be too simple and comes with limitations that EKS doesn’t have. So, when should you select EKS?

- When you need granular control over container placement – ECS doesn’t have a concept similar to pods. So, if you need fine-grained control over container placement, you’d better go somewhere else.

- When you need more networking modes – ECS has only one networking mode available in Fargate. If your serverless app needs something else, EKS is a better choice.

- When you want more control over your tooling – ECS comes with a set of default tools. For example, you can use only Web Console, CLI, and SDKs for management. Logging and performance monitoring are carried out using CloudWatch, service discovery through Route 53, and deployments via ECS itself. If you don’t like any of these tools, go for EKS.

- When you want more control over your costs – EKS allows you to control cloud expenses via dedicated automated platforms like CAST AI, which is beneficial if you’re running a larger operation.

Already running workloads on ECS? Here’s how to migrate from ECS to EKS.

When to choose ECS?

Some teams might benefit from ECS more than EKS thanks to its simplicity. Here’s when selecting ECS makes the most sense:

- When you have limited DevOps resources – ECS comes with a gentle learning curve, so if you’re not prepared to re-architect your applications around Kubernetes concepts, ECS will be easier to adopt.

- When you don’t have time or resources to pick and choose add-ons – Kubernetes (and, by extension, EKS) is a far more flexible tool. You can choose from many different add-ons available in the system. But each of them requires time, resources, and maintenance to make the most of it. ECS has only one option in each category – if it works for you, you’re good to go.

- When Kubernetes is too much – If adopting K8s all at once is a little too much for you, ECS could be a good first step. It allows you to try your hand at containerization and move your workloads into a managed service without a huge upfront investment.

Wrap up

If the flexibility of moving across different cloud vendors isn’t that important to you and you’re happy to put all of your eggs in the (AWS) basket, using ECS or EKS (with or without Fargate) makes a lot of sense.

But if you’d like to have the freedom to integrate with the open-source Kubernetes community, putting time and energy into kOps is worth it. And there are plenty of cloud-native solutions to help you along the way.

To compare EKS, ECS, Fargate, and kOps side by side and have something to refer to in the future, download this 1-page comparison table:


FAQ

Amazon Web Services offers two container orchestration services: Amazon Elastic Container Service (ECS) and Amazon Elastic Kubernetes Service (EKS).

While ECS is a container orchestration service, EKS is a Kubernetes managed service. ECS is a scalable container orchestration platform that allows users to run, stop, and manage containers in a cluster. EKS, on the other hand, helps teams to build Kubernetes clusters on AWS without having to install Kubernetes on EC2 compute instances manually.

Many companies across various industries use Amazon EKS to run their Kubernetes clusters. Among them, you can find HSBC, Amazon.com, GoDaddy, Delivery Hero, and Mercari.

Amazon ECS is not Kubernetes: it’s AWS’s own container orchestrator, with simpler concepts and fewer moving parts than Kubernetes. That’s why it’s a good fit for teams that are new to container orchestration.

The interesting part is that you can use ECS with EC2 instances or AWS Fargate. While the former works best for long-running tasks, AWS Fargate comes in handy for serverless tasks.

EKS is a Kubernetes managed service, whereas ECS is a container orchestration service. ECS is a scalable container orchestration solution for running, stopping, and managing containers in a cluster. EKS, on the other hand, assists teams in deploying Kubernetes clusters on AWS without the need to manually install Kubernetes on EC2 instances.

Here are a few key differences between ECS and EKS:

- While ECS doesn’t come with any extra charges, EKS does: $0.10 per hour per Kubernetes cluster.

- Deploying clusters on ECS is much easier than on EKS, since the latter requires expert configuration.

- While ECS is a proprietary technology, EKS is based on Kubernetes, an open-source technology. That’s why it also offers more help from the community. If you go for ECS, you have only the corporate support of AWS at your disposal.

What about Fargate? AWS Fargate manages the underlying servers used to run containers on ECS (and EKS). Users no longer need to boot a server, install the agent, and keep everything up to date. AWS automatically adds pre-configured servers to the “pool” to support their requirements.

Users of ECS can take advantage of Amazon EC2 instances or AWS Fargate. Using EC2 instances makes sense if you expect to maximize cluster utilization. Fargate comes in handy when utilization falls under specific thresholds. If performance efficiency is important to you, then your ECS setup would benefit more from the EC2 launch type because it offers you several configuration possibilities (like disk type or GPU).

AWS publishes EKS-optimized Amazon Machine Images (AMIs) that come with the necessary worker node binaries, such as Docker and the kubelet. AWS updates these AMIs regularly to include the latest versions of these components.

AWS Fargate is a serverless compute engine for containers that integrates with two platforms: Amazon Elastic Container Service (ECS) and Amazon Elastic Kubernetes Service (EKS). So you can use Fargate without ECS, as long as you use it with EKS. Either way, you no longer have to provision and manage servers on your own.
