The 2024 Kubernetes Cost Benchmark Report underscores that organizations are struggling to manually manage cloud-native infrastructure and provides best practices to cut cloud costs

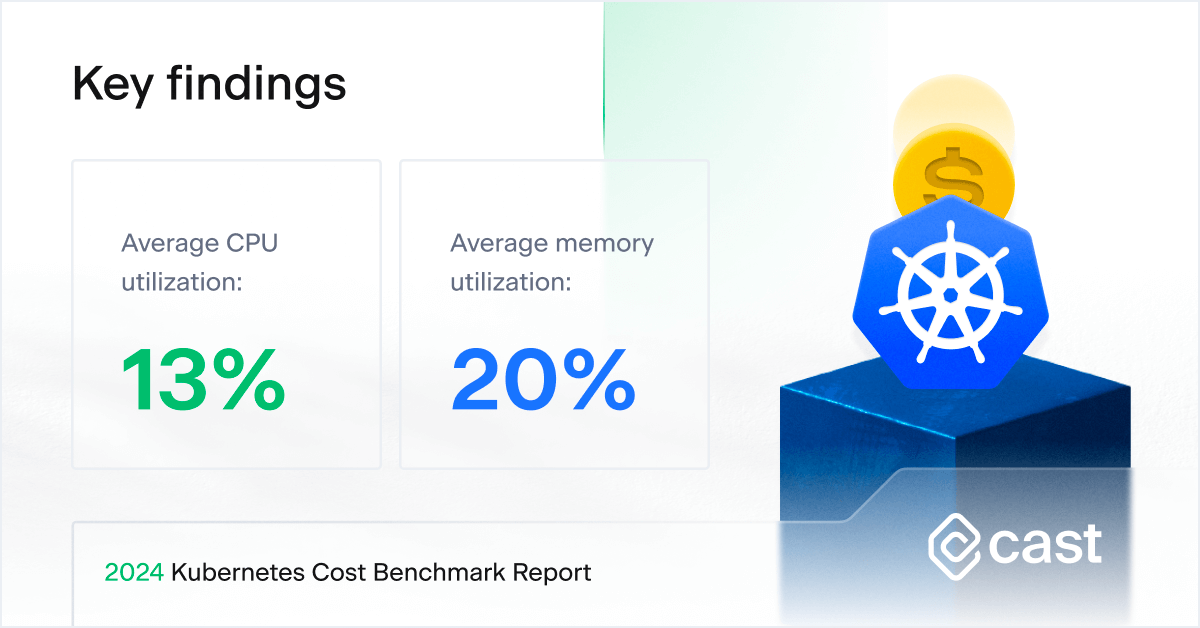

CAST AI, the leading Kubernetes automation platform, today published its second annual Kubernetes Cost Benchmark Report, which highlights Kubernetes cost optimization trends to help companies reduce their cloud costs. The analysis found that in clusters containing 50 or more CPUs, organizations utilize on average only 13% of provisioned CPUs and 20% of provisioned memory.

The report’s findings are based on CAST AI’s analysis of 4,000 clusters running on Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure (Azure) between Jan. 1 and Dec. 31, 2023, before they were optimized by the company’s automation platform. Key findings of the report include:

- For larger clusters containing 1,000 or more CPUs, organizations on average only utilize 17% of provisioned CPUs.

- CPU utilization varies little between AWS and Azure; both have nearly identical utilization rates of 11%. Cloud waste is lower on GCP, where CPU utilization is 17%. Memory utilization differs less across providers: GCP (18%), AWS (20%), and Azure (22%).

- Spot instance pricing across AWS’ six most popular instances for US-East and US-West regions (excluding Gov regions) increased 23% between 2022 and 2023.

- The biggest drivers of overspending include:

  - Overprovisioning: Allocating more computing resources than necessary to an application or system.

  - Unwarranted headroom: Requests for the number of CPUs are set too high.

  - Low Spot instance usage: Many companies are reluctant to use Spot instances due to concerns over perceived instability.

  - Low usage of “custom instance size” on GKE: It is difficult to choose the best CPU and memory ratio, unless the selection of custom instances is dynamic and automated.

The report also offers a series of best practices to help organizations reduce cloud costs and maximize return on investment while maintaining performance.

“This year’s report makes it clear that companies running applications on Kubernetes are still in the early stages of their optimization journeys, and they’re grappling with the complexity of manually managing cloud-native infrastructure,” said Laurent Gil, co-founder and chief product officer, CAST AI. “The gap between provisioned and requested CPUs widened between 2022 and 2023 from 37 to 43 percent, so the problem is only going to worsen as more companies adopt Kubernetes.”

About the 2024 Kubernetes Cost Benchmark Report

To view other insights from the report, please visit https://cast.ai/kubernetes-cost-benchmark/. The report is based on CAST AI’s analysis of 4,000 clusters running on Amazon Web Services, Google Cloud Platform, and Microsoft Azure between Jan. 1 and Dec. 31, 2023, before they were optimized by the company’s automation platform. This year, CAST AI expanded the report to include an analysis of CPU and memory utilization, comparing provisioned, requested, and utilized resources. CAST AI excluded clusters with fewer than 50 CPUs from its analysis.
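For readers who want to run the same kind of comparison on their own clusters, the relationship between provisioned, requested, and utilized CPUs can be sketched as follows. This is a minimal illustration, not CAST AI's methodology: the report's exact formulas are not given here, so the code assumes that both utilization and the provisioned-to-requested gap are expressed as a percentage of provisioned CPUs. The class, the function names, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ClusterCpu:
    """CPU figures for one cluster (hypothetical structure for illustration)."""
    provisioned: float  # CPUs available on the cluster's nodes
    requested: float    # CPUs reserved through workload requests
    utilized: float     # CPUs actually consumed on average


def utilization_pct(cluster: ClusterCpu) -> float:
    """Utilized CPUs as a percentage of provisioned CPUs (assumed definition)."""
    if cluster.provisioned <= 0:
        raise ValueError("provisioned CPUs must be positive")
    return 100.0 * cluster.utilized / cluster.provisioned


def provisioned_requested_gap_pct(cluster: ClusterCpu) -> float:
    """Provisioned CPUs that no workload requested, as a percentage of
    provisioned CPUs (assumed definition)."""
    if cluster.provisioned <= 0:
        raise ValueError("provisioned CPUs must be positive")
    return 100.0 * (cluster.provisioned - cluster.requested) / cluster.provisioned


# Made-up sample cluster, not data from the report.
sample = ClusterCpu(provisioned=100, requested=57, utilized=13)
print(f"CPU utilization: {utilization_pct(sample):.0f}%")
print(f"Provisioned vs. requested gap: {provisioned_requested_gap_pct(sample):.0f}%")
```

The sample values were chosen so that the output lines up with the averages quoted in this release; real clusters would supply these three figures from their own monitoring data.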

About CAST AI

CAST AI is the leading Kubernetes automation platform that cuts AWS, Azure, and GCP customers’ cloud costs by over 50%. CAST AI goes beyond monitoring clusters and making recommendations. The platform utilizes advanced machine learning algorithms to analyze and automatically optimize clusters in real time, reducing customers’ cloud costs, and improving performance and reliability to bolster DevOps and engineering productivity.

Learn more: https://cast.ai/

Media and Analyst Contact

Erika Rosenstein

Director of PR and Analyst Relations

[email protected]
